Palantir, Power, and the Rhetoric of Fear

Lately, Palantir has been in the spotlight, but not for reasons that reflect well on the company. It did not earn its notoriety through ethical innovation or corporate responsibility. Instead, it has become synonymous with mass surveillance, ethically dubious software, and a leadership that seems to embrace controversy.

CEO Alex Karp, in particular, has a habit of doubling down with provocative statements such as:

I want less war. You only stop war by having the best technology and by scaring the bejabers — I'm trying to be nice here — out of our adversaries. If they are not scared, they don't wake up scared, they don't go to bed scared, they don't fear that the wrath of America will come down on them, they will attack us. They will attack us everywhere.

For anyone familiar with Palantir's work, this rhetoric is not surprising. It is consistent with the company's trajectory. Palantir is behind tools like ELITE, a new software platform used by U.S. Immigration and Customs Enforcement (ICE) to identify and target neighborhoods for immigration raids, as revealed in an investigation by 404 Media. According to their reporting, which drew on internal ICE material and testimony from an official, ELITE aggregates massive amounts of public and private data and generates entire dossiers on individuals based on predictive criteria, often without the subjects' knowledge.

Agents have described it as a specialized version of Google Maps, but instead of finding restaurants, it locates human targets. Officers can either select specific people or draw a perimeter on a map to see who might be inside. ELITE assigns a "confidence score" for how likely it is that a target actually resides at a given location, based on data sourced from the Department of Health and Human Services (HHS) and other government agencies. The Electronic Frontier Foundation (EFF) documented this conduit and found that individuals' addresses from Medicaid records fed directly into ICE's targeting algorithms. This represents a particularly disturbing data flow: healthcare enrollment information, originally collected for social services, is repurposed for immigration enforcement.

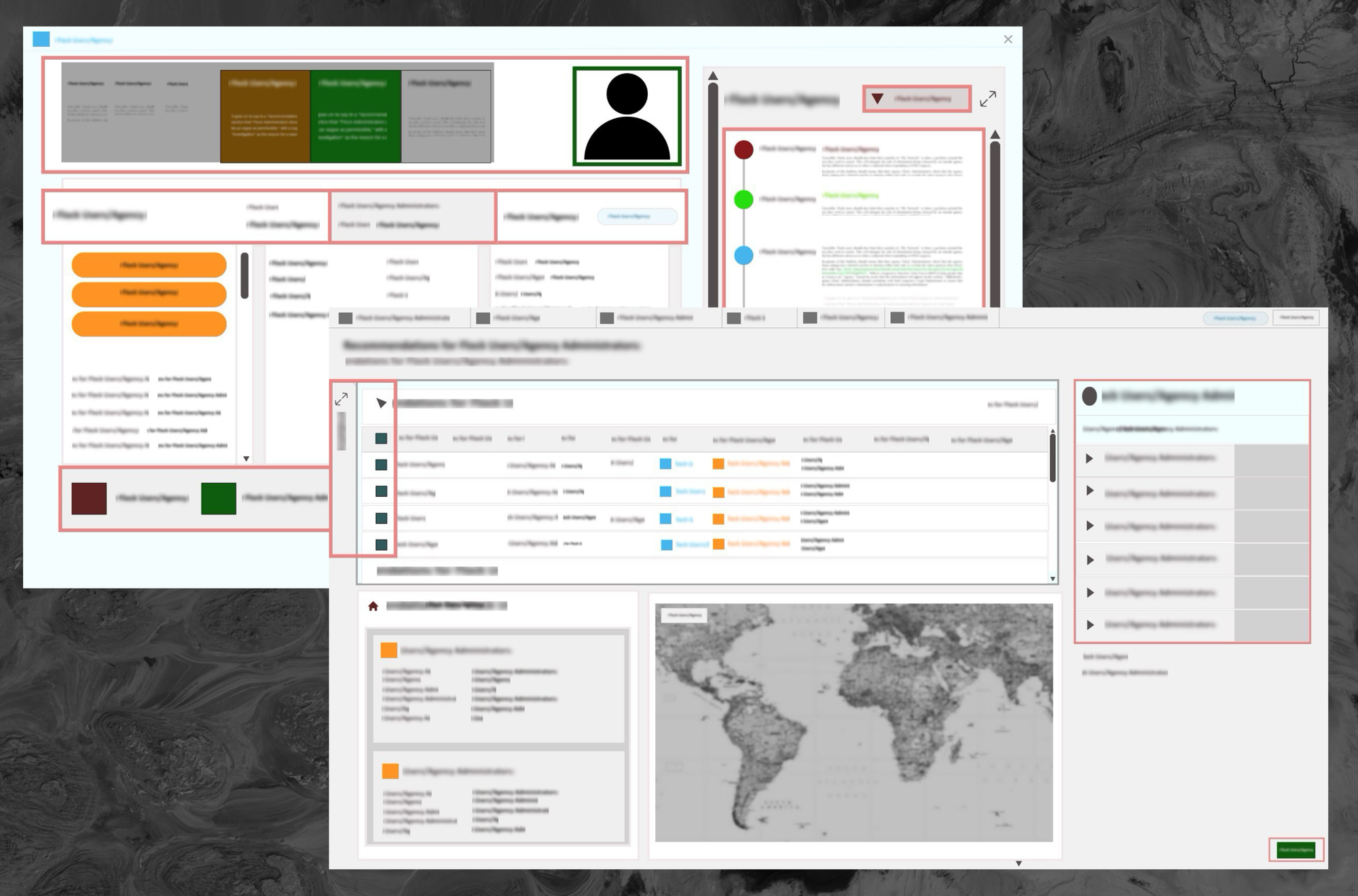

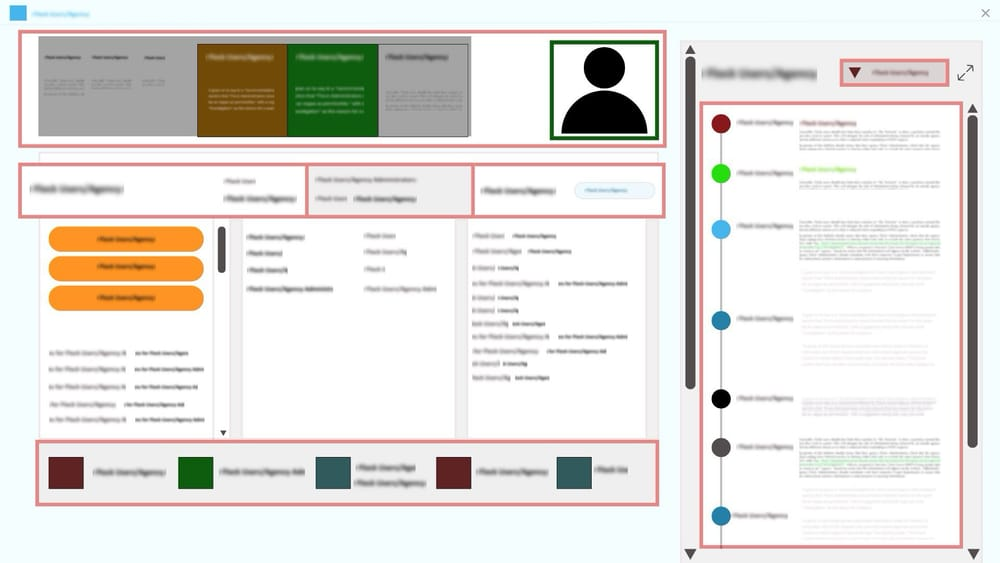

404 Media obtained the full ELITE user guide, a document that lays bare how Palantir has seamlessly stitched surveillance, data analytics, and enforcement together. It is a stark illustration of how abstract data systems translate into real-world harm. If you're interested in learning more about how these tools operate in practice, 404 Media's reporting is essential reading.

404 Media's investigation documented how ELITE has already been used by ICE to plan and execute raids. This exemplifies what critics call "data-driven deportation"—the automation of immigration enforcement through algorithmic targeting. Palantir responded to EFF's reporting by stating that ELITE is "a component of the capabilities provided under a previously reported pilot program with ICE," effectively confirming its existence while attempting to label it as routine government surveillance.

One OS To Rule Them All

ImmigrationOS represents the next evolution of this surveillance infrastructure. Another tool developed by Palantir, it is tightly integrated into ICE and Homeland Security Investigations (HSI) operations. ICE is reportedly paying $30 million for access to the tool, which offers near-real-time visibility into people self-deporting from the United States, as reported by WIRED and Benzinga. Contract documents reviewed by 404 Media indicate Palantir's agreement runs through at least September 2027, with an initial ImmigrationOS prototype expected by September 25, 2025. The system centers on "Targeting and Enforcement Prioritization," which in practice means real-time tracking of self-deportations and end-to-end management of the immigration lifecycle, from identification through final removal from the country.

Independent investigations by WIRED and Amnesty International detailed ImmigrationOS's extensive data integration. The system aggregates information across public and private boundaries, accessing license plate reads, travel and communication records, location tracking, legal and criminal histories, and detailed biometrics. The platform then uses this consolidated dataset to perform continuous monitoring and automated open-source intelligence. It applies machine learning to assign intent and emotional classifications to individuals' online behavior via sentiment analysis. Furthermore, WIRED notes that ImmigrationOS builds upon ICE's existing Palantir-maintained case management system (in use since 2014), significantly expanding the agency's data fusion capabilities.

According to Documented NY, Palantir has worked with the Department of Government Efficiency (DOGE) to integrate federal databases. The publication highlighted potential conflicts of interest, citing former Palantir employees currently at DOGE and advisor Stephen Miller's alleged stock ownership. Additionally, WIRED reports that Customs and Border Protection's planned expansion of real-time facial recognition could interface with Palantir's ImmigrationOS.

Advocacy groups have cited these data integrations as severe risks to civil liberties. Amnesty International called for the program to be halted following the arrest of Mahmoud Khalil—a former Columbia student protesting the war in Gaza. While the legal classification of specific arrests remains a subject of debate, such incidents have amplified public concern that consolidated federal data could be leveraged to unlawfully deport political dissidents across all immigration statuses.

A FALCON Stalks Its Prey

On the ICE tip lines, Palantir licenses its FALCON software, a tool that automatically summarizes and categorizes incoming phone and email tips and translates non-English tips. Data from these tip lines is then ingested into the FALCON Search & Analysis (FALCON SA) system, a separate but integrated tool that we know about only thanks to Freedom of Information Act (FOIA) requests from the Electronic Privacy Information Center (EPIC). EPIC's FOIA litigation revealed that HSI purchased perpetual licenses for 56 server cores from Palantir Technologies, allowing for the initial install and development of the FALCON system, which replaced several outdated information search and analysis systems previously used by HSI. According to the privacy impact assessment obtained by EPIC, the system builds chronological timelines of a subject's movements and maps their geographic locations. It also generates visual charts to uncover hidden connections between people, organizations, places, and events. Finally, it converts raw, unorganized surveillance notes into a searchable database, allowing users to quickly scan large volumes of information.

EPIC's FOIA documents also reveal that FALCON integrates Bank Secrecy Act data, trade data from both US and foreign partners, investigative records, and additional data sources not fully specified in public documents. EPIC's settlement of its FOIA lawsuit against ICE revealed that FALCON enables the linking of phone numbers, GPS data, and social network data with the database containing sensitive personal information, providing ICE with vast surveillance capabilities.

A specialized component, FALCON DARTTS (Data Analysis and Research for Trade Transparency System), is overseen by HSI's Trade Transparency Unit and contains financial and trade data with specific access controls, suggesting tiered surveillance capabilities within the broader FALCON architecture. Palantir's contracting documents obtained by EPIC reveal that Palantir has not authorized any other vendor to provide training services on Palantir or FALCON, creating a captive market where government agencies become dependent on Palantir not just for software but for operational knowledge. This is a structural barrier to switching vendors or reducing surveillance capabilities.

While FALCON has been customized for ICE, Palantir Gotham is the foundational platform that powers these operations. Originally developed for military and intelligence use, Gotham is now marketed openly with disturbing candor about its capabilities. Palantir's own marketing materials describe Gotham as supporting "soldiers with an AI-powered kill chain, seamlessly and responsibly integrating target identification and target effector pairing." This "kill chain" terminology refers to the military decision cycle of find, fix, track, target, engage, assess. The ICE List Wiki, maintained by immigration advocates, documents that FALCON is "HSI's customized implementation of Palantir Gotham, used for federated search and analytics across multiple law enforcement data environments," confirming that Gotham's military-grade capabilities have been directly adapted for immigration enforcement.

Palantir's white paper on AI-Enabled Operations elaborates that Gotham enables users to "integrate and exploit massive scale data and deploy proven AI to warfighters," with data, user expertise, and AI-generated insights coming together in near real-time to improve situational awareness, anticipate possible outcomes, and suggest courses of action. Gotham's technical documentation states the platform enables "the autonomous tasking of sensors, from drones to satellites, based on AI driven rules or manual inputs for humans in the loop control," meaning the system can automatically deploy surveillance assets based on algorithmic triggers, reducing human judgment in surveillance initiation.

Gotham Europa, the European variant, is marketed to intelligence and law enforcement agencies around the world, with the same core capabilities deployed in military contexts available to domestic police and immigration enforcement.

Multiple police departments have used Gotham for crime analysis. BuzzFeed News documented that more than half of all Los Angeles Police Department (LAPD) officers had accounts on Palantir in 2016, with those officers running 60,000 searches per month. The system included 1 billion pictures taken of license plates from traffic lights and toll booths in Los Angeles and neighboring areas. If you've driven through Los Angeles since 2015, chances are good that the police can view when and where your car was photographed, and then click on your name to learn all about you.

Campaign Zero reported that Palantir brings military-grade surveillance and predictive policing tools into local law enforcement. The Conversation noted that Palantir's work with the federal government using the Gotham platform is amplifying surveillance capabilities across agencies. Civil liberties organizations, including the American Civil Liberties Union (ACLU), have criticized this use as predictive policing. Built In, citing the ACLU, reported that when police rely on AI-generated "threat scores," they may be acting on inaccurate information, reinforcing systemic bias and violating individuals' constitutional rights. ACLU Massachusetts stated that the Trump administration has empowered big tech surveillance companies like Palantir and Babel Street to aggregate Americans' personal data into massive databases.

(Seeing as Palantir seems determined to use pop-culture references for their product names, why not simply call themselves Omni Consumer Products? No matter, palantír is probably just as apt.)

Gotham's capabilities let law enforcement and military operators build a broad picture of targets without traditional warrant requirements. The Princeton Tory reported that Palantir's data centralization plan will allow the US government to access comprehensive profiles of citizens. The Organisation for Economic Co-operation and Development's AI observatory (OECD.AI) documented that in Germany, the Baden-Württemberg parliament approved police use of Palantir's AI-powered Gotham software for advanced data analysis starting in 2026, with critics warning of potential privacy violations, data misuse, and dependency on a US company.

Automate Everything

Palantir's commercial platform Foundry extends these surveillance capabilities into corporate and civilian sectors. The core innovation is what Palantir calls "The Foundry Ontology," which it pitches as integrating the semantic, kinetic, and dynamic elements of a business so that teams can harmonize and automate decision-making in complex settings. In Palantir's usage, an ontology is a formal representation of the entities in a domain, their properties, and the relationships between them, which Palantir's documentation describes as "connecting data, analytics, and business teams to a common foundation." In surveillance contexts, this means creating unified data models of human subjects, their attributes, relationships, movements, and behaviors that can be queried and analyzed algorithmically.
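The definition of an ontology given above (entities, their properties, and the relationships between them) maps onto a very small data structure. The Python sketch below is illustrative only: the class names `Entity` and `Ontology`, the `ships` relation, and the supplier and shipment records are all hypothetical, and none of it is Palantir's API or schema. It deliberately uses a mundane business domain.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Entity:
    """A typed object with named properties (all names are hypothetical)."""
    entity_id: str
    entity_type: str
    properties: tuple = ()  # (name, value) pairs


@dataclass
class Ontology:
    """Entities plus typed, directed relationships between them."""
    entities: dict = field(default_factory=dict)
    links: list = field(default_factory=list)  # (source_id, relation, target_id)

    def add(self, entity: Entity) -> None:
        self.entities[entity.entity_id] = entity

    def relate(self, source_id: str, relation: str, target_id: str) -> None:
        # Refuse links to unknown entities so the graph stays consistent.
        if source_id not in self.entities or target_id not in self.entities:
            raise KeyError("both ends of a link must already exist")
        self.links.append((source_id, relation, target_id))

    def neighbors(self, entity_id: str, relation: str) -> list:
        """Follow one relation type outward from an entity."""
        return [self.entities[t] for s, r, t in self.links
                if s == entity_id and r == relation]


onto = Ontology()
onto.add(Entity("sup-1", "Supplier", (("name", "Acme Parts"),)))
onto.add(Entity("shp-7", "Shipment", (("status", "delayed"),)))
onto.relate("sup-1", "ships", "shp-7")

# Query across a relationship and filter on a property.
delayed = [e.entity_id for e in onto.neighbors("sup-1", "ships")
           if dict(e.properties).get("status") == "delayed"]
print(delayed)  # ['shp-7']
```

Nothing in the structure is specific to suppliers and shipments: the entity types and relation names are just strings, which is why a model of this general shape can be pointed at any domain.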

Foundry Rules provides pre-configured surveillance rules for continuous monitoring, with the system able to generate alerts based on data patterns. Palantir's documentation describes how Foundry enables "binding streaming data to ontology's objects and actions for closed loop action," meaning incoming surveillance data immediately updates subject profiles and can trigger automated responses without human review.

Zero Hedge reported that Foundry is already deployed across at least four federal agencies: Defense, Homeland Security, Health and Human Services, and Education—with the same platform used for corporate analytics being applied to government surveillance. This "ontology" approach allows seamless translation between commercial and enforcement contexts. Zero Hedge further reported that since Donald Trump took office, Palantir has received over $113 million in government spending. WinBuzzer noted that Palantir deepened government ties by partnering with Accenture Federal Services to train and certify at least 1,000 workers on Palantir's Foundry software and AI technology.

Palantir's AIP (Artificial Intelligence Platform) launched in 2023, bringing large language models (LLMs) and generative AI to these surveillance infrastructures. The platform allows customers to "run LLMs and other models on [their] private network with full control." Palantir's AIP documentation describes a comprehensive suite for building AI-powered surveillance and enforcement applications: AIP Logic for developing production-ready AI-powered workflows, agents, and functions; AIP Agent Studio for creating autonomous AI agents that can execute tasks; AIP Evals for evaluating and refining AI system performance; and AIP Automate for triggering automated actions based on AI outputs.

AIP's integration with Automate allows "Ontology edits to be automatically applied or staged for human review." In enforcement contexts, this means AI systems can automatically update subject profiles, generate alerts, or trigger operational responses, with human review optional rather than mandatory. Palantir's Automate documentation explains that Automate integrates natively with Foundry applications including AIP Logic, Notepad, Object Explorer, Ontology Manager, and Time series, allowing automations to trigger on existing objects or when new objects are created.
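The difference between edits that are "automatically applied" and edits that are "staged for human review" can be made concrete with a short Python sketch. This is a generic illustration of the pattern, not Palantir's implementation: `ProposedEdit`, `EditPipeline`, the `require_review` flag, and the `order-42` record are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedEdit:
    """A machine-generated change to one field of one record (hypothetical)."""
    object_id: str
    field_name: str
    new_value: str


@dataclass
class EditPipeline:
    """Routes proposed edits either straight into the records or into a queue."""
    require_review: bool
    records: dict = field(default_factory=dict)
    review_queue: list = field(default_factory=list)

    def submit(self, edit: ProposedEdit) -> None:
        if self.require_review:
            # Staged: nothing changes until a person approves it.
            self.review_queue.append(edit)
        else:
            # Auto-applied: the record changes with no human involved.
            self._apply(edit)

    def approve_next(self) -> None:
        """A reviewer accepts the oldest staged edit."""
        self._apply(self.review_queue.pop(0))

    def _apply(self, edit: ProposedEdit) -> None:
        self.records.setdefault(edit.object_id, {})[edit.field_name] = edit.new_value


edit = ProposedEdit("order-42", "status", "flagged")

auto = EditPipeline(require_review=False)
auto.submit(edit)
print(auto.records)  # {'order-42': {'status': 'flagged'}}

staged = EditPipeline(require_review=True)
staged.submit(edit)
print(staged.records, len(staged.review_queue))  # {} 1
```

In this sketch the only thing separating the two outcomes is a single boolean set by whoever configures the pipeline, which is the sense in which review is optional rather than structural.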

AIP supports models from xAI, OpenAI, Anthropic, Meta, and Google. This multi-model approach means surveillance and enforcement agencies aren't locked into a single AI provider and can select or combine models based on capabilities, cost, or specific surveillance tasks. AIP Assist is "an LLM-powered support tool" that allows users to "ask AIP Assist questions in natural language and receive real time help." In surveillance contexts, this means analysts can query databases using conversational language rather than structured queries, lowering the technical barrier to complex surveillance operations. Yahoo Finance reported that AIP Assist allows users to ask natural language questions about Palantir's documentation by combining natural language processing (NLP) with third-party LLMs.

AIP Analyst is "an interface for agentic workflows that lets you use natural language to perform ad hoc analyses across your ontology." You can ask AIP Analyst a question, and the agent will answer by autonomously searching your ontology, creating object sets, and transforming data before generating summaries and visualizations. Wissly AI documented that AIP Assist ensures users receive data-backed answers with clear source attribution and access control.

Palantir emphasizes that AIP provides "robust access controls, encryption, and auditing capabilities to maintain data integrity and transparency," with built-in governance tools to help organizations maintain accountability. Palantir's AI ethics documentation outlines how AIP supports eight core themes of responsible AI development. However, these technical safeguards address data security rather than ethical governance. They ensure only authorized users can access surveillance data, but they don't restrict what surveillance can be conducted. Palantir's privacy whitepaper states that the company's approach to privacy and governance engineering blends security, privacy, compliance, and regulatory standards alongside technical architecture, but critics note that privacy by design does not mean surveillance by consent.

Piecing It All Together

What makes these systems particularly scary is not merely their individual capabilities, but their deliberate architectural integration. These are not separate products operating in isolation. They form a unified surveillance-to-enforcement pipeline, with each component feeding into and enhancing the others.

The pipeline begins with Foundry and Gotham, which serve as the data ingestion engines. Palantir's documentation on cross-application interactivity confirms that "all Gotham applications and many Foundry applications implement some form of cross-application interactivity." Type mapping documentation reveals that users can "create new Gotham types based on existing Foundry object types, property types, and shared property types which remain synchronized as an Ontology evolves over time." This means the same data models, the same surveillance ontologies, flow seamlessly between military intelligence platforms and domestic enforcement systems.

Once data is ingested, the Ontology creates unified subject models combining biometric, biographic, behavioral, and relational data. These profiles are not static records. They are living data structures that update in real time as new information streams in. Palantir's platform overview describes how "Palantir's application development framework enables enterprises to build operational workflows and develop use cases that leverage user actions, alerting, and other end user frontline functions in collaboration with tool-wielding, data-aware AIP Agents."

Analysis and targeting then flow through FALCON SA, ELITE, and ImmigrationOS, which apply algorithmic analysis to identify targets and predict behaviors. These tools do not merely present data to human analysts. They generate operational recommendations. ELITE's "confidence scores" are not neutral probabilities. They are decision aids that direct enforcement resources toward specific human beings. ImmigrationOS's automated open-source intelligence capabilities conduct sentiment analysis and pattern recognition without human intervention.

AIP then automates pattern recognition, natural language processing of communications, and predictive modeling at scale. Palantir's platform overview describes how "AIP is part of an AI Mesh that can deliver the full gamut of AI driven products, from LLM powered web applications to mobile applications using vision language models to edge applications that embed localized AI." This mesh connects everything: drones, satellites, license plate readers, cell tower data, social media feeds, and government databases into a single responsive system.

Operational execution then occurs through automated alerts, geofenced targeting, and algorithmic scoring that enables enforcement action. And crucially, the feedback loop means each enforcement action generates new data that refines the algorithms. A raid produces new addresses, new associations, new patterns of movement. This data feeds back into the Ontology, improving the next round of targeting. The system learns from its own operations, becoming more efficient at finding and apprehending human beings with each iteration.

This architecture transforms immigration enforcement from a human-driven investigative process into a data-driven industrial operation, one where the scale of surveillance and enforcement is limited only by computational capacity, not by human resources or ethical judgment. The same infrastructure that Palantir markets to utilities for "wildfire prevention, customer notifications, emergency response, and daily meteorology analysis, leveraging large scale ML driven weather forecasting, AI-enabled inspections, and Internet of Things (IoT) sensors" is deployed against immigrant communities. The same "closed loop action" that optimizes supply chains optimizes deportation pipelines.

What This Means

Palantir's tools have fueled some of the largest raids on immigrant communities in history. The company's platforms centralize data that can include our known addresses, phone numbers, driving records, credit history, immigration history, health data, and social media presence, all in one place. What makes these systems particularly dystopian is the feedback loop built into them: each enforcement action generates new data that refines the algorithms, creating increasingly precise targeting capabilities. The confidence scores and predictive analytics normalize the treatment of human beings as data points to be tracked and apprehended.

[...] while we may not have reached the world of RoboCop or "Minority Report" just yet, the use of AI in law enforcement is rapidly moving from fiction to reality. The speed at which my speculative articles have been overtaken by real-world developments is a stark reminder of the pace of technological change. - Judge Scott Schlegel

Public documents have revealed that Palantir has constructed an unprecedented, vertically integrated surveillance infrastructure spanning from sensor deployment to automated decision-making. Using the same underlying technology, platforms like Gotham and ImmigrationOS support operations ranging from military action to immigration enforcement. Documented capabilities include autonomous sensor tasking, real-time ontology updates, AI-powered "kill chains," and population-scale sentiment analysis. By their own description, these systems "illuminate migrants' movements," automate the "end to end immigration lifecycle," and deploy "proven AI to warfighters."

This architecture represents a fundamental shift in state power. These are not neutral tools, but infrastructures of control designed to optimize surveillance and enforcement. Because these systems are already operational, funded, and deployed across federal agencies, with contracts running through at least 2027, the critical question is no longer what Palantir can build, but what limits, if any, will govern their active operations.